Or: What happens when anyone can build anything but almost no one can judge it

I need to tell you what's happening right now, as I write this.

Thirty minutes ago, I was building a custom tool. I needed my business software to produce a report it doesn't offer—nothing exotic, just a way to see what's installed across the computers I manage for clients. The software can't do it, so I asked an AI to build me something that could.

This is normal for me now. Over the past week, I've had AI write a data-collection script, work through storage constraints, and scaffold out a small application to make that data searchable. It's been a genuine collaboration—me describing what I need, the AI generating code, me reviewing and redirecting when something's off.

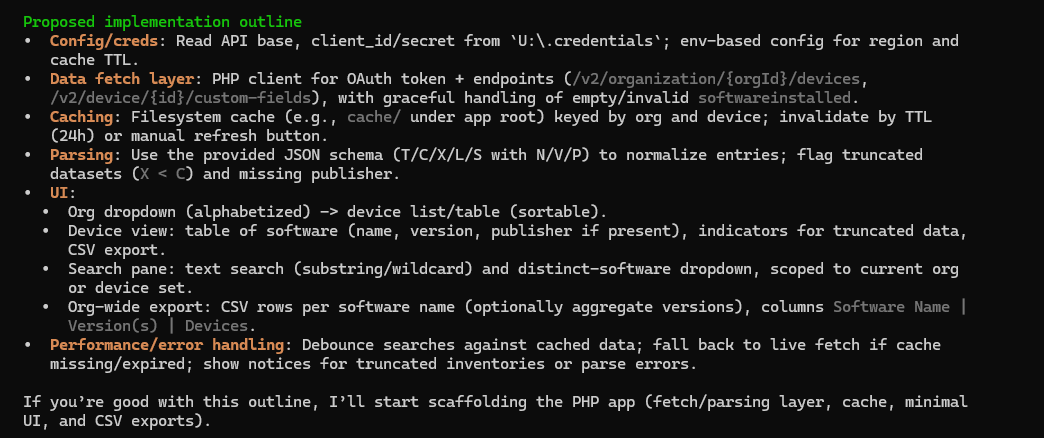

A few minutes ago, the AI produced a summary of where the project stands—that's the image you see here. I was about to type my next instruction when something stopped me.

I'm still on that screen. The cursor is still blinking, waiting for my input. But instead of continuing, I opened a different AI tool and started writing this.

Here's What Stopped Me

I can read that summary. I know what "OAuth token endpoints" and "filesystem cache with TTL invalidation" mean. Not because I'm particularly clever—because I've spent decades working in this specific domain. It's jargon. Trade dialect. The same way a contractor reads blueprints or an accountant reads a balance sheet.

But I'm guessing most of you looked at that screenshot and felt a familiar sensation: the polite blankness of staring at something you're clearly supposed to understand but don't. It's the same feeling you had the first time you encountered algebra. Letters mixed with numbers, "solve for x," notation that seemed designed to exclude you.

Algebra stops being hieroglyphics once you learn the grammar. Until then, it might as well be a foreign language.

Here's the thing I can't stop thinking about: I didn't write that code. The AI did. My role was to direct, review, and catch mistakes. When it forgot to handle an error condition, I noticed. When its caching logic had a gap, I spotted it. When it made assumptions about my environment that weren't true, I corrected course.

Someone without my background could have the exact same conversation with the exact same AI. They'd get a tool that appears to work. They'd never see what they couldn't see.

The Part That Made Me Pause

The fact that I'm writing this post right now—mid-project, cursor still blinking on that other screen—is itself a demonstration of what these tools make possible. I had a thought worth capturing. I pivoted to an AI assistant. Thirty minutes later, I have a draft that would have taken me half a day to produce on my own.

That's the promise. And it's real. I use these tools constantly. They are genuine force multipliers when paired with someone who understands the territory.

But speed and reduced friction come with a price: the scope of what you've created may be unknowable to you.

When everything is fast and everything works and the AI confidently delivers something that looks professional, how do you know what you're not seeing? How do you audit something you can't read? How do you ask the right questions when you don't know the vocabulary?

Creation and Judgment Are Different Skills

This isn't a screed against AI tools. I'm using them right now—to build something useful, and to write these words. The expansion of creative capability they represent is genuine and, I think, net positive.

But the ability to make something has never guaranteed the ability to evaluate it. We rarely had to reckon with that, because the difficulty of making things acted as its own filter. If you could build it, you probably understood it.

That filter is gone.

Today, anyone can ask an AI to build a customer database, an inventory tracker, a tool that handles sensitive information. The AI will deliver something that runs. It will look like real software. It will feel like a solution.

Whether it's actually secure—whether it validates inputs, encrypts data, limits access, fails safely—is a question the person who requested it often cannot answer. Not because they're careless or foolish. Because they're looking at algebra before anyone taught them what "x" means.
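To make that concrete, here is a minimal sketch in Python of how two versions of the same feature can look identical from the outside. Everything in it is hypothetical and made up for illustration: the `customers` table, the sample rows, and both lookup functions. It is not code from the project described above. Both functions return the right answer for an ordinary name. Only one of them keeps the input from being treated as part of the query.

```python
import sqlite3


def make_db():
    """Create a throwaway in-memory database with two sample customers."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        [("Ada", "ada@example.com"), ("Grace", "grace@example.com")],
    )
    return conn


def find_customer_unsafe(conn, name):
    # Pastes the input straight into the SQL text, so the input can
    # change what the query means.
    query = "SELECT name, email FROM customers WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def find_customer_safe(conn, name):
    # Passes the input as a parameter, so it is only ever treated as data.
    query = "SELECT name, email FROM customers WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


if __name__ == "__main__":
    conn = make_db()
    crafted = "x' OR '1'='1"  # input written to alter the query

    # An ordinary lookup: both versions behave the same.
    print(find_customer_unsafe(conn, "Ada"))
    print(find_customer_safe(conn, "Ada"))

    # A crafted lookup: the unsafe version returns every row in the table,
    # the safe version returns nothing.
    print(find_customer_unsafe(conn, crafted))
    print(find_customer_safe(conn, crafted))
```

If you only ever test with ordinary names, the two functions are indistinguishable. The difference shows up only when someone knows which input to try, and that is the judgment the tool does not supply.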

The Bottom Line

I don't have a clean answer. I'm not sure one exists yet.

But I think we're living through a moment that deserves more attention than it's getting. The distance between what we can now create and what we can meaningfully judge is growing fast. That gap has consequences we're only beginning to see.

The tools are democratizing creation.

They are not democratizing judgment.

What we do about that—how we close the gap, or build new safeguards, or simply learn to ask better questions—is something we should probably figure out before the algebra is everywhere and almost nobody can read it.